Question 1:

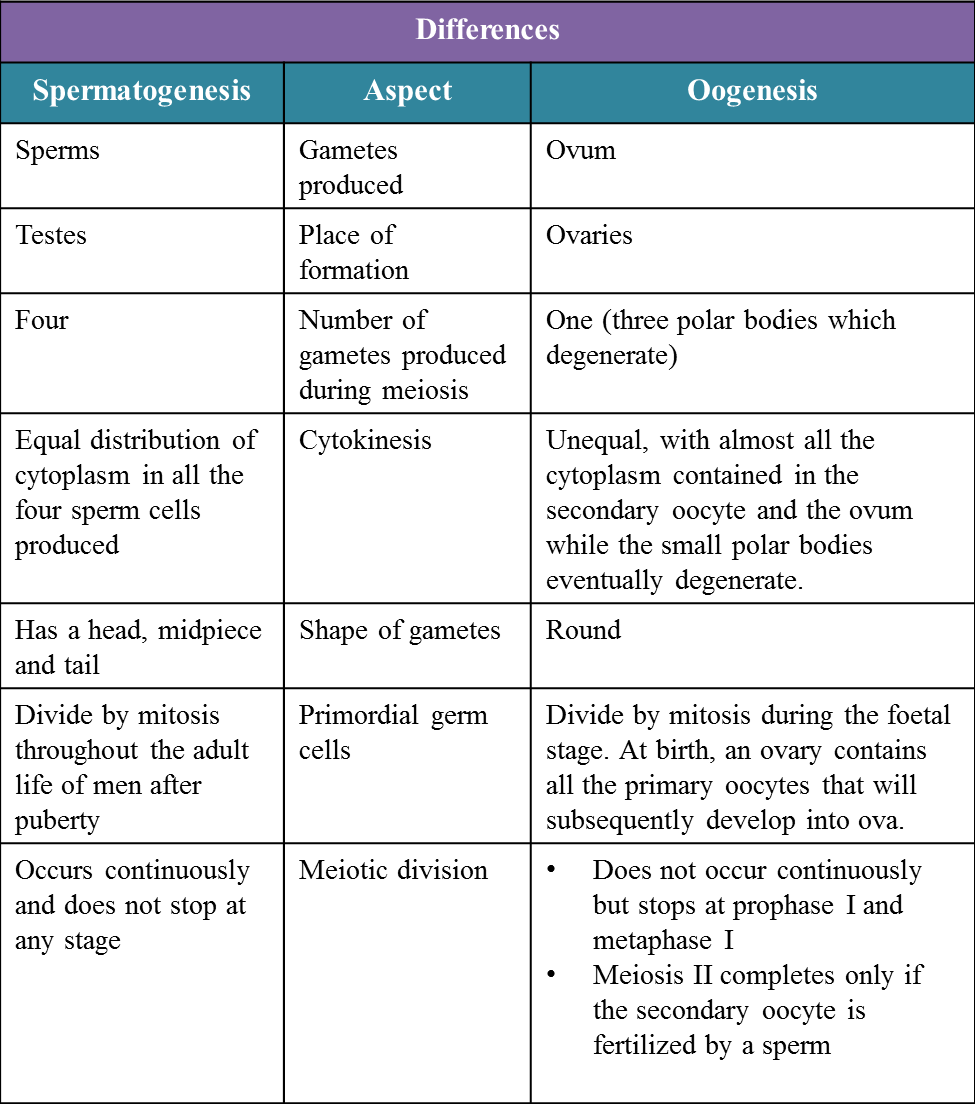

(a) Diagram 1 shows part of a female reproductive system.

The development of the zygote occurs in this system.

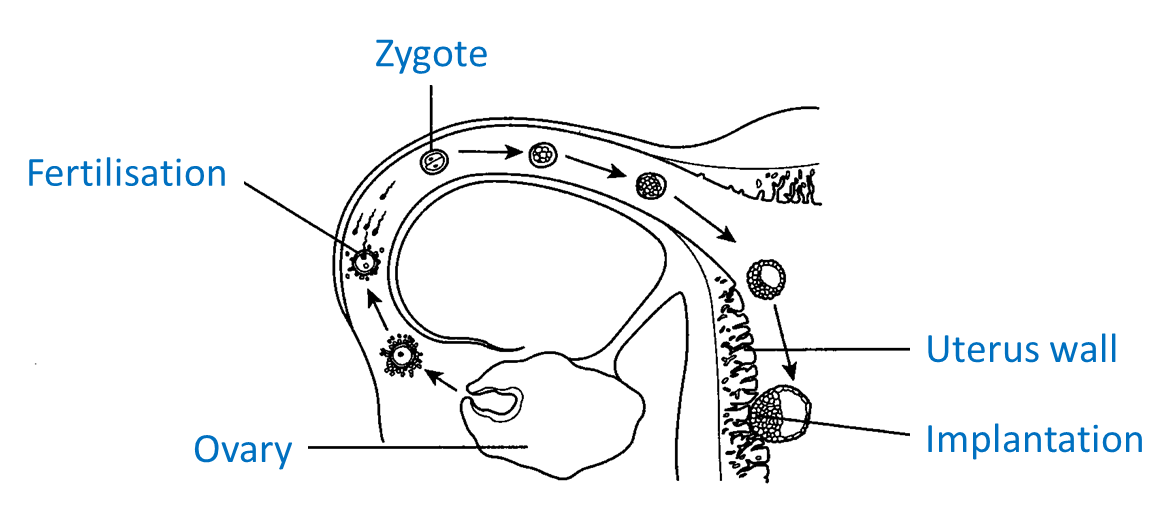

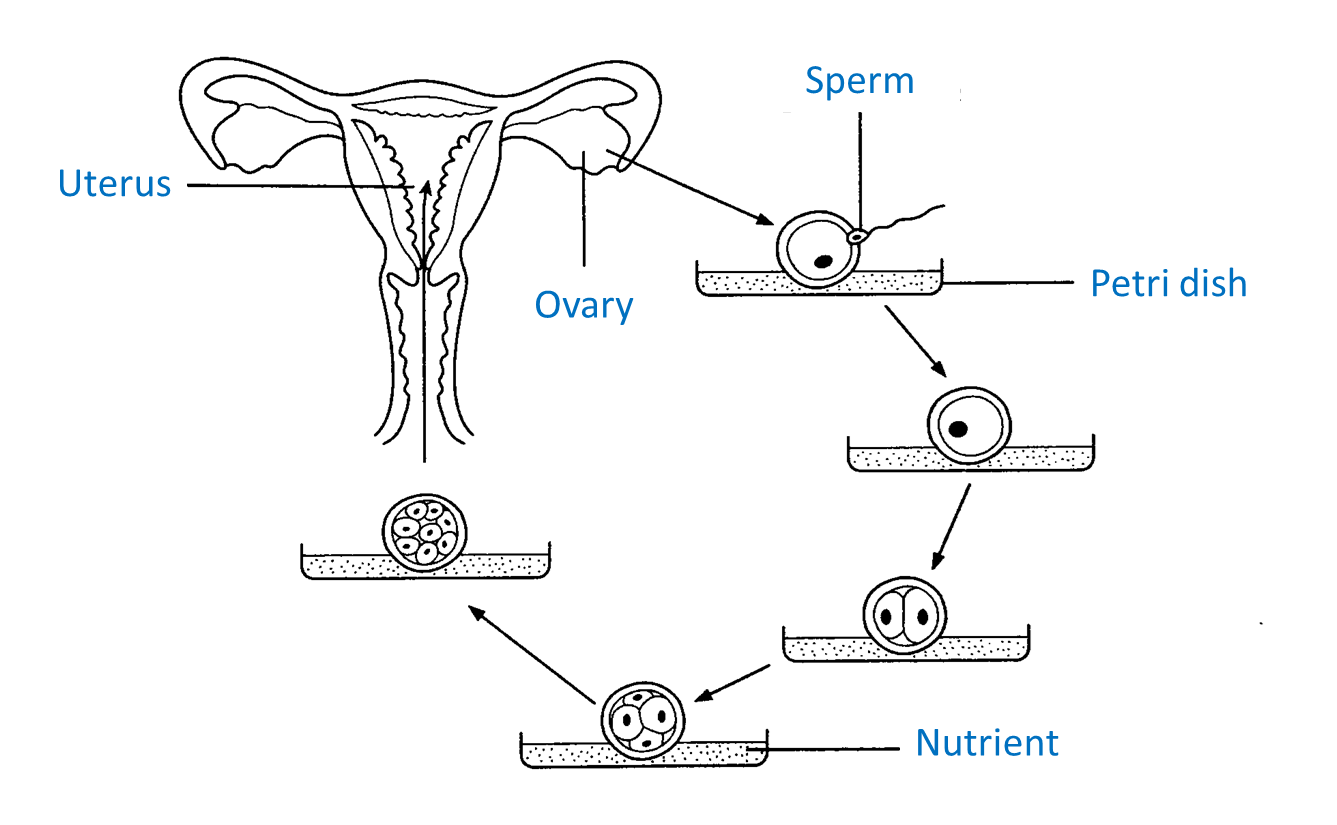

(b) Diagram 2 shows a method to overcome infertility in a married woman with a blockage in both of her Fallopian tubes.

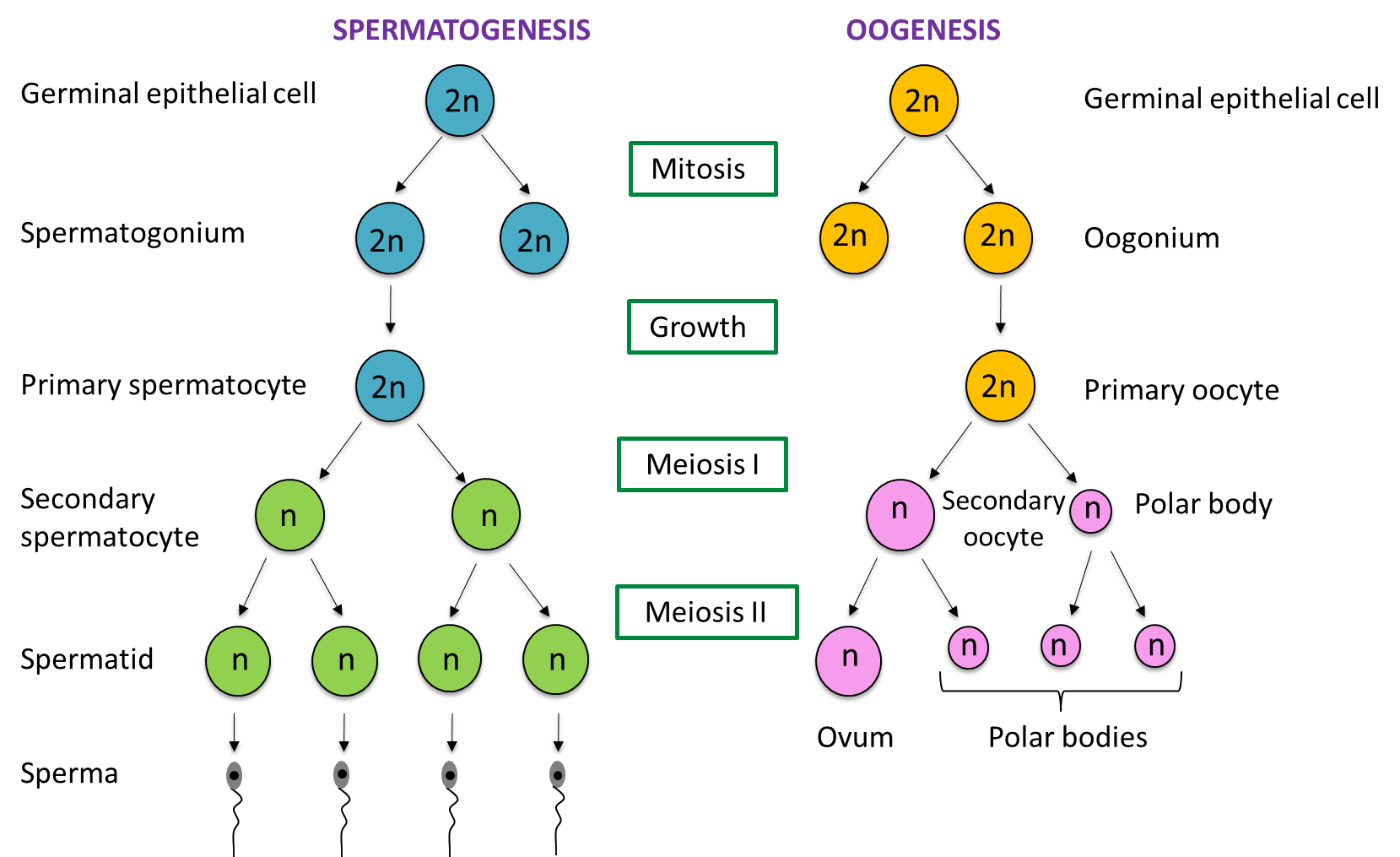

(c) Diagram 3 shows a schematic diagram of spermatogenesis and oogenesis in humans.


(a) Diagram 1 shows part of a female reproductive system.

The development of the zygote occurs in this system.

Diagram 1

Based on Diagram 1, describe the development of the zygote after fertilization until implantation. [4 marks]

(b) Diagram 2 shows a method to overcome infertility in a married woman with a blockage in both of her Fallopian tubes.

Diagram 2

Explain how this method helps this woman to have a baby. [4 marks]

(c) Diagram 3 shows a schematic diagram of spermatogenesis and oogenesis in humans.

Based on Diagram 3, compare spermatogenesis and oogenesis. [10 marks]

Solution:

(a)

After fertilization:

- The zygote begins to divide repeatedly by mitosis and travels along the Fallopian tube towards the uterus.

- The first division forms a two-celled embryo.

- Further divisions produce a solid ball of cells called a morula.

- Next, the morula becomes a blastocyst, a hollow ball of cells.

- Implantation of the blastocyst then occurs in the endometrium.

(b)

It is the in vitro fertilization (IVF) method. Fertilization takes place outside the body, so the blocked Fallopian tubes are bypassed.

- A fine laparoscope is used to remove an ovum from the ovary.

- The ovum is then placed in a Petri dish with a culture solution to mature.

- Then, concentrated sperm from the father are added.

- A sperm and the ovum fuse to form a zygote which develops into an embryo.

- After 2–4 days, when the embryo reaches the eight-cell stage, it is inserted into the uterus through the cervix for implantation in the uterine wall.

- A baby conceived in this way is called a test-tube baby.

(c)

Similarities:

- Both processes occur in the reproductive organs (the testes and the ovaries).

- Both processes involve meiosis.

- Both processes produce haploid gametes that are involved in fertilization.